The capabilities of quantum computers are growing very fast, and we expect to see announcements in the near future that a few real-world applications have achieved quantum advantage, meaning that an application can be processed faster, better, or more cheaply on a quantum computer than on a fast classical computer.

Some believe that when the technology reaches this state for a few initial examples, the technology will have reached an inflection point and there will be an avalanche of additional applications that will turn to quantum computing and result in a “Surging Growth” adoption rate as shown in the picture above.

We do not think this is the case. Our view is that quantum computing adoption over the next decade or so will be more like “Slow and Steady”. Let me explain why.

Every new technology has a class of users called “Early Adopters”. These are folks who are enthused about the technology and want to be among the first to take advantage of it. We expect that most of the initial end users who claim to have demonstrated a Quantum Advantage application will be people of this type. In some cases, people may choose a quantum approach over a classical computer even though both can provide similar results, just for the novelty of doing it.

However, the largest portion of the potential quantum computing user base is not like this. These potential end users include data scientists, researchers, CIOs, and others in enterprises who have day-to-day pressures and complex computational problems to solve. In most cases, these folks will be reluctant to switch over to quantum computing unless the savings are very substantial. Their reasons include familiarity with an existing solution, no time to learn how to program a quantum computer, fear that the quantum solution may have a hidden flaw somewhere, belief that continued improvements in classical computing will nullify any quantum gains that may currently be present, and hesitancy to throw out a working solution in which they have already invested a lot of time and money.

We recently saw two presentations that can help explain why the adoption curve won’t look like a hockey stick.

Bob Sorensen from Hyperion Research presented an interesting study at the recent Q2B conference in December. He asked QC buyers and users this question: What is the minimum application performance gain you would require to justify using quantum computing for your existing and planned workloads? You might think that a 25% or 50% improvement over a classical solution would be enough to get end users to switch over, but the data shows otherwise.

He received responses from 115 individuals in his survey, with the following results:

| Performance Gain | Percent of Respondents |
| --- | --- |
| 2-5X | 7.8% |
| 5-10X | 12.2% |
| 10-50X | 22.6% |
| 50-100X | 26.1% |
| 100-250X | 9.6% |
| 250-500X | 2.6% |
| > 500X | 3.5% |
| Application only possible on a quantum computer | 15.7% |

Although this is still a relatively small sample and doesn’t distinguish between improvements in speed, solution quality, or cost, it is interesting nonetheless. The survey indicates that a majority of respondents (about 57%, counting those who would only consider applications that are impossible on a classical computer) would require a performance improvement of 50X or greater in order to switch.
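The majority figure can be checked directly from the table. The short Python sketch below sums the shares of the buckets below and at or above 50X. The variable names are ours, the percentages are copied from the survey results above, and we assume the "only possible on a quantum computer" group belongs on the 50X-or-greater side.

```python
# Survey buckets copied from the table above: (label, percent of respondents).
survey = [
    ("2-5X", 7.8),
    ("5-10X", 12.2),
    ("10-50X", 22.6),
    ("50-100X", 26.1),
    ("100-250X", 9.6),
    ("250-500X", 2.6),
    ("> 500X", 3.5),
    ("Application only possible on a quantum computer", 15.7),
]

# Buckets whose required gain tops out at 50X or less.
below_50x = {"2-5X", "5-10X", "10-50X"}

share_below = sum(pct for label, pct in survey if label in below_50x)
# Assumption: the quantum-only group is counted with the 50X-or-greater side.
share_at_least = sum(pct for label, pct in survey if label not in below_50x)

print(f"Would switch for less than 50X: {share_below:.1f}%")
print(f"Require 50X or more (or quantum-only): {share_at_least:.1f}%")
```

The published percentages add up to 100.1 rather than 100 because of rounding, so the two shares should be read as approximate.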

In his presentation, Bob pointed out (as we also have in some of our other articles) that classical computing is not standing still. Although Moore’s Law is slowing down, classical improvements are still occurring regularly through alternative non-von Neumann architectures, neuromorphic computing, increased parallelism, and more efficient algorithms. So a 50X improvement in computation capabilities is about what the classical world will achieve within the next four years.

Another interesting presentation we saw was given by Matthias Troyer of Microsoft at QuCQC 2021, titled “Achieving Practical Quantum Advantage in Chemistry Simulations”. The first thing he points out is that, compared to classical technology, quantum gates are very, very slow. He calculates that a state-of-the-art classical GPU can process logical operations at a rate of 5 Petaops (5×10^15 logical operations/second), whereas a future quantum computer would be able to support about 2 Megaops (2×10^6 logical operations/second). That is a gap of roughly 10 orders of magnitude in favor of the classical machine.
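As a quick sanity check on the size of that gap, the sketch below divides the two rates quoted above and converts the ratio into orders of magnitude. The variable names are ours; only the two rates come from the presentation as reported here.

```python
import math

# Rates as quoted in the article (logical operations per second).
classical_ops_per_s = 5e15  # state-of-the-art classical GPU
quantum_ops_per_s = 2e6     # projected future quantum computer

ratio = classical_ops_per_s / quantum_ops_per_s
orders = math.log10(ratio)

print(f"Classical/quantum rate ratio: {ratio:.2e}")
print(f"That is about {orders:.1f} orders of magnitude")
```

With these two figures the ratio works out to 2.5×10^9, a little under 10 orders of magnitude, which is consistent with the rounded number in the text.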

You might ask, if quantum computers are this slow, why is anyone considering quantum computing at all?

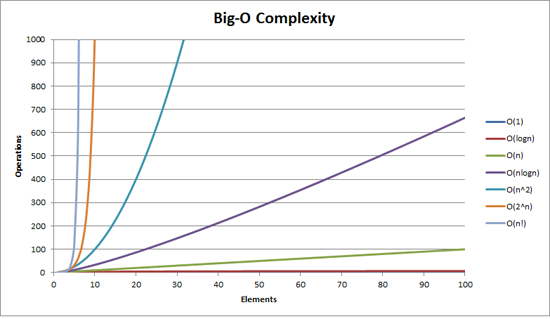

To understand this, you first need to understand Big-O complexity. Computer scientists rate algorithms for certain problems with a nomenclature called Big-O notation, which describes how the run time on a computer will increase with the size of the problem. For example, among sorting algorithms, a simple Bubble Sort requires O(n^2) operations on average, where n is the number of elements in the list. A more efficient Shell Sort (with a suitable gap sequence) takes O(n (log n)^2) operations, while an even more efficient Heap Sort takes O(n log n) operations. The shallower the slope, the greater the algorithm’s advantage when the number of elements gets very large.
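To get a feel for how quickly these growth rates diverge, here is a small Python sketch that evaluates the three expressions at a few list sizes. It is an idealized illustration, not a benchmark: constant factors are ignored, base-2 logarithms are our assumption, the function names are ours, and the Shell Sort figure assumes a gap sequence that gives n (log n)^2 behavior.

```python
import math

def bubble_ops(n):
    """Idealized operation count for Bubble Sort: n^2."""
    return n ** 2

def shell_ops(n):
    """Idealized count for Shell Sort with a suitable gap sequence: n (log n)^2."""
    return n * math.log2(n) ** 2

def heap_ops(n):
    """Idealized operation count for Heap Sort: n log n."""
    return n * math.log2(n)

for n in (10, 1_000, 1_000_000):
    print(
        f"n={n:>9,}  n^2={bubble_ops(n):.2e}  "
        f"n(log n)^2={shell_ops(n):.2e}  n log n={heap_ops(n):.2e}"
    )
```

The gap between the columns widens as n grows, which is the point of comparing slopes rather than individual run times.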

So the answer as to why quantum computing can sometimes be better than classical can be seen in the chart below. The secret of quantum computers is that they can leverage the quantum principles of superposition and entanglement to enable new algorithms that have shallower Big-O complexity slopes.

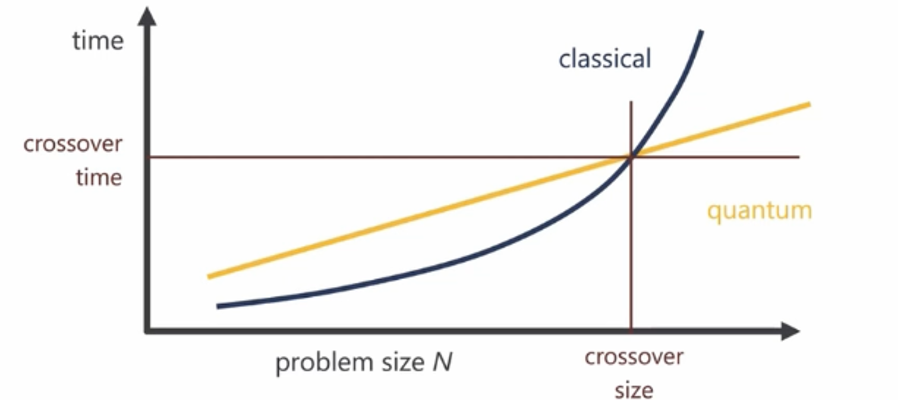

But remember that each logical operation on a quantum computer takes much, much longer than a logical operation on a classical computer. So you need two things for quantum computing to pay off:

- You need to have a very large Big-O complexity advantage in a quantum algorithm versus a classical algorithm.

- You also need a large problem size in order to reach crossover (as shown in the curve above).
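The interplay of these two conditions can be sketched with a toy model. Assume, purely for illustration, that the quantum algorithm needs N logical operations and the classical algorithm needs N^k, and that each machine runs at the rate quoted earlier in the article. The crossover is the problem size at which both finish at the same time. The function name and the model are ours. It counts only raw logical operations and ignores overheads such as error correction, data loading, and the many operations a real algorithmic step may require, so it is not a reproduction of Troyer's calculation and its absolute numbers should not be compared with his results.

```python
CLASSICAL_OPS_PER_S = 5e15  # rate quoted earlier in the article
QUANTUM_OPS_PER_S = 2e6     # rate quoted earlier in the article

def crossover_size(k, r_classical=CLASSICAL_OPS_PER_S, r_quantum=QUANTUM_OPS_PER_S):
    """Toy model: problem size N where N**k / r_classical == N / r_quantum.

    Solving for N gives N = (r_classical / r_quantum) ** (1 / (k - 1)).
    """
    if k <= 1:
        raise ValueError("the classical exponent k must be greater than 1")
    return (r_classical / r_quantum) ** (1.0 / (k - 1))

for k in (2, 3, 4):
    print(f"classical N^{k} vs quantum N: crossover near N = {crossover_size(k):.3g}")
```

The qualitative trend is what matters here: the larger the gap in exponents, the smaller the problem at which the slower quantum machine catches up. Real workloads add overheads that push every crossover further out.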

Putting together the data on logical operation speed and algorithm complexity, along with an assumption that the maximum run time for a program on either computer would be limited to two weeks, Troyer reached an interesting conclusion: a quadratic improvement in algorithm efficiency (e.g. N versus N^2) would never pay off! One would need superquadratic or exponential scaling (e.g. N versus N^3, N^4, or 2^N) for a quantum computer to complete a job faster than the classical computer within that two-week limit.

One commonly taught algorithm is Grover’s search algorithm, which provides a quadratic improvement (N^(1/2) versus N). So, based on his conclusion, Grover’s algorithm will likely never be able to provide a quantum advantage for a production, real-world application.

It turns out that Google also published a paper last year titled “Focus beyond quadratic speedups for error-corrected quantum advantage” with a similar conclusion. Their paper indicated that an algorithm should really have a quartic advantage (N versus N^4), or possibly a cubic advantage (N versus N^3), for it to show promise of providing a superior quantum solution over classical.

Combine the information in these two presentations with two other facts: quantum computer hardware is a very difficult technology, and quantum computing software requires completely new algorithms and techniques that programmers need to learn how to use. Taken together, we think the hurdles to quickly putting this technology into production for commercial advantage (as opposed to proof of concept) are higher than some people think. As a result, we conclude that quantum computing adoption will be what we call slow and steady for the next several years, rather than the hockey stick growth we have seen at some classical computing companies such as Google, Facebook, or Amazon.

February 27, 2021

Great analysis!

So even quantum computing needs to Cross the Chasm…

Yes. Even though quantum technology may utilize some new laws of physics, the laws of the marketplace will not be any different from those of classical computing.